The Google AI Agents Intensive Course (5DGAI) debuted in March 2025 (the first 5DGAI) and returned in November 2025 (the second 5DGAI), offering developers a front-row seat to the rapid evolution of agentic systems. The first 5DGAI focused on foundational skills: writing prompts, training agents, customizing them with Retrieval-Augmented Generation (RAG), and deploying them via MLOps. Participants learned to fine-tune models and integrate external knowledge sources to improve agent performance.

By November, the second 5DGAI curriculum had advanced significantly. Developers were trained to build autonomous agents capable of managing other agents and tools, and to deploy them via Agent Ops (ADK, Vertex AI, Kubernetes), a shift that reflects the growing complexity of real-world AI deployments. The November 2025 “Introduction to Agents” white paper introduced a more formal framework for understanding these systems, especially in the section titled “Taxonomy of Agentic Systems” (pages 14–18).

Taxonomy of Agentic Systems

The taxonomy outlined in the November 2025 white paper breaks down agentic systems into five key levels:

- Level 0: Core Reasoning System

A standalone language model that relies solely on its pre-trained knowledge. It can explain concepts and plan solutions but lacks real-time awareness or access to external data. (e.g., the original ChatGPT on GPT-3.5, 2022-2023)

- Level 1: Connected Problem-Solver

The agent gains tool access, allowing it to fetch real-time data and interact with external systems (e.g., APIs, RAG). It can now solve dynamic problems beyond its training set. (e.g., ChatGPT with GPT-4 in 2023, with plugins, browsing, and code interpreter)

- Level 2: Strategic Problem-Solver

Introduces context engineering: agents can plan multi-step tasks, curate relevant information, and execute complex missions with precision and adaptability. (e.g., Gemini 1.5 Pro and GPT-4 Turbo in 2024, with memory and tool chaining)

- Level 3: Collaborative Multi-Agent System

Agents work as a team, delegating tasks to specialized sub-agents. This mirrors human organizational structures and enables scalable, parallel workflows. (e.g., Google DeepMind’s multi-agent demos and OpenAI DevDay agent frameworks, late 2024–2025)

- Level 4: Self-Evolving System

Agents autonomously identify capability gaps and create new agents or tools to fill them. This marks the emergence of adaptive, self-improving agent ecosystems. (No verified examples in 2025)

Together, these five levels illustrate a clear progression in agentic system design, from simple, isolated reasoning engines to dynamic, self-evolving ecosystems. Each level builds on the previous by adding new capabilities: tool use, strategic planning, collaboration, and autonomous expansion. This taxonomy helps developers and architects scope their agent designs based on mission complexity, resource needs, and desired autonomy. As systems advance toward Level 4, they begin to resemble adaptive organizations capable of learning, growing, and responding intelligently to new challenges.
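To make the cumulative nature of the levels concrete, here is a minimal Python sketch that maps a set of capabilities to a taxonomy level. The capability flag names (`uses_tools`, `plans_multi_step`, and so on) and the `classify` helper are hypothetical, invented for illustration; the white paper describes the levels in prose and does not define such an API.

```python
from enum import IntEnum


class AgentLevel(IntEnum):
    CORE_REASONING = 0   # standalone model, pre-trained knowledge only
    CONNECTED = 1        # tool access (APIs, RAG)
    STRATEGIC = 2        # multi-step planning, context engineering
    COLLABORATIVE = 3    # delegates to specialized sub-agents
    SELF_EVOLVING = 4    # creates new agents or tools to fill gaps


# Hypothetical capability flags, ordered so each one unlocks the next level.
CAPABILITY_LADDER = [
    ("uses_tools", AgentLevel.CONNECTED),
    ("plans_multi_step", AgentLevel.STRATEGIC),
    ("delegates_to_sub_agents", AgentLevel.COLLABORATIVE),
    ("creates_agents_or_tools", AgentLevel.SELF_EVOLVING),
]


def classify(capabilities: set[str]) -> AgentLevel:
    """Return the highest level reached without skipping a lower capability."""
    level = AgentLevel.CORE_REASONING
    for flag, unlocked in CAPABILITY_LADDER:
        if flag not in capabilities:
            break  # levels build on each other, so stop at the first gap
        level = unlocked
    return level
```

Under these assumptions, `classify({"uses_tools", "plans_multi_step"})` yields `AgentLevel.STRATEGIC`, while a design that delegates to sub-agents but has no tool access still classifies as `AgentLevel.CORE_REASONING`, because each level is treated as building on the one before it.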

Agent Use in Data Visitation and Security Concerns

As agents gain access to sensitive data and tools, security becomes a central concern. During the Day 2 live-stream, Alex Wissner-Gross, a renowned scientist and entrepreneur, highlighted the risks and proposed a compelling vision:

“I foresee an internet of agents, with one singleton agent per corporation who shares secrets with sub-agents but does not expose them to the outside.”

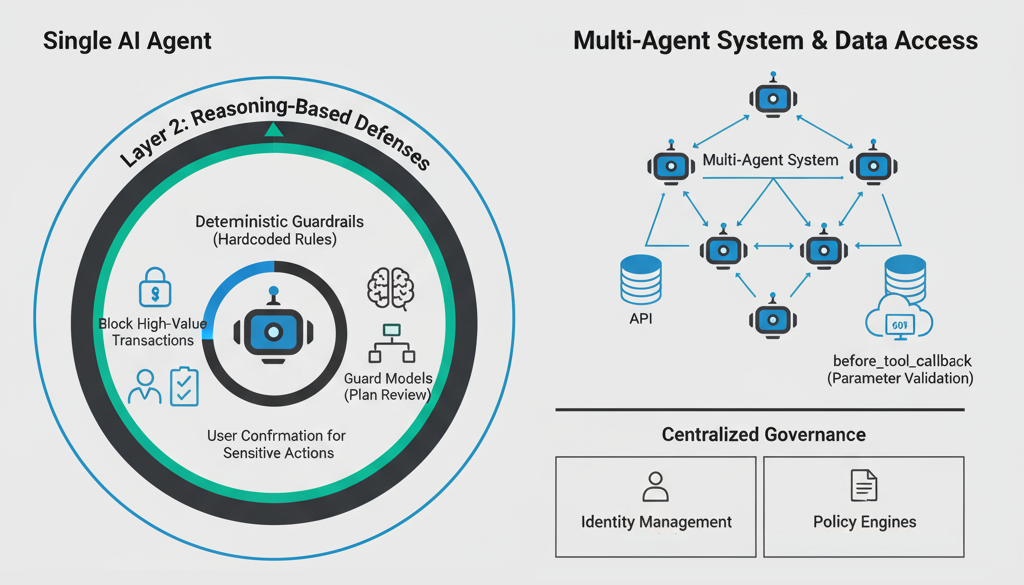

This model emphasizes containment and controlled delegation. In data visitation platforms, where agents interact with private datasets, even a single agent with decision-making authority must be carefully sandboxed. The white paper warns that tool access and autonomy introduce a delicate balance between utility and risk, especially when agents operate across organizational boundaries.
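One way to picture containment and controlled delegation is the rough Python sketch below. The `CorporateAgent` and `SubAgent` classes and their methods are hypothetical names chosen for illustration, not part of the course material or any Google API, and the string-replacement redaction is deliberately naive.

```python
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    name: str
    # Secrets are excluded from repr so they do not leak into logs.
    _secrets: dict[str, str] = field(default_factory=dict, repr=False)

    def run(self, task: str) -> str:
        # A real sub-agent would call a model or tool; this one only reports
        # which secrets it was granted, never their values.
        granted = ", ".join(sorted(self._secrets)) or "none"
        return f"{self.name} finished '{task}' using secrets: {granted}"


class CorporateAgent:
    """Singleton-style root agent holding every secret for one organization."""

    def __init__(self, secrets: dict[str, str]):
        self._secrets = dict(secrets)

    def spawn(self, name: str, allowed: set[str]) -> SubAgent:
        # Controlled delegation: hand over only the named subset of secrets.
        scoped = {k: v for k, v in self._secrets.items() if k in allowed}
        return SubAgent(name, scoped)

    def respond_externally(self, text: str) -> str:
        # Containment: redact every secret value before text leaves the org.
        for value in self._secrets.values():
            text = text.replace(value, "[REDACTED]")
        return text
```

In this sketch a sub-agent spawned with `allowed={"api_key"}` never receives the other secrets, and anything routed through `respond_externally` has known secret values masked. A production system would need far stronger guarantees, such as process isolation and a proper secret manager.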

To mitigate these risks, the paper recommends:

- Role-based access control for agents

- Audit trails for tool invocation and data access

- Memory partitioning to prevent leakage across tasks

- Blocking prompt injections through newer methods such as adversarial training and specialized security-analyst agents

These strategies are essential for building trustworthy multi-agent ecosystems, especially in enterprise and government settings.
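The first three recommendations above can be sketched together in a few lines of Python. This is a minimal illustration under stated assumptions: the `ROLE_PERMISSIONS` table, the `AuditedToolbox` class, and the tool names are all hypothetical, and prompt-injection defenses are out of scope for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-tool permissions; a real deployment would load these
# from a policy store rather than hard-coding them.
ROLE_PERMISSIONS = {
    "analyst": {"query_dataset"},
    "admin": {"query_dataset", "export_dataset"},
}


@dataclass
class AuditedToolbox:
    tools: dict                                    # tool name -> callable
    audit_log: list = field(default_factory=list)
    _memory: dict = field(default_factory=dict)    # task_id -> results

    def invoke(self, agent_id, role, task_id, tool_name, *args):
        # Role-based access control: unknown roles get no permissions.
        allowed = tool_name in ROLE_PERMISSIONS.get(role, set())
        # Audit trail: record every attempt, including denied ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "role": role,
            "task": task_id,
            "tool": tool_name,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"role '{role}' may not call '{tool_name}'")
        result = self.tools[tool_name](*args)
        # Memory partitioning: results are stored under their own task only.
        self._memory.setdefault(task_id, []).append(result)
        return result

    def recall(self, task_id):
        # Return a copy so callers cannot mutate another task's memory.
        return list(self._memory.get(task_id, []))
```

Denied calls are logged before the exception is raised, so the audit trail captures attempted misuse as well as successful access, and `recall` only ever returns results stored under the requested task.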

Final Thoughts

The Google AI Agents Intensive Course not only reflects the technical progress in agent development but also surfaces the ethical and operational challenges of deploying autonomous systems. As we move toward an internet of agents, frameworks like the Taxonomy of Agentic Systems and security models proposed by experts will be critical in shaping a safe and scalable future.