In 2026, even the traditionally cautious GitHub Copilot in VS Code has clearly shifted toward greater agent autonomy, with chat commands such as /autoApprove and /yolo. This evolution builds on a turning point in late 2024, when developer tools first crossed a threshold: AI assistants stopped being passive autocomplete helpers and began acting like semi‑autonomous agents. The clearest symbol of this shift is the rise of YOLO mode, first popularized in Cursor in 2024, then in Claude Code in 2025 (--dangerously-skip-permissions), and echoed in 2026 in the evolving agent features of GitHub Copilot in VS Code.

Where YOLO Mode Started: Cursor’s Shadow Workspace

Cursor introduced “YOLO mode” as part of its Shadow Workspace, a feature that lets the AI freely modify multiple files, refactor entire subsystems, and run multi‑step changes without waiting for line‑by‑line approval. Developers quickly realized that enabling YOLO mode meant giving the agent permission to “just go for it”, taking bold, sweeping actions across the codebase.

The name wasn’t accidental. In early community discussions, users joked that “you only live once” was the perfect motto for letting an AI take bigger risks. The term stuck, and YOLO mode became shorthand for high‑autonomy coding assistance.

In 2025, most AI IDEs and coding assistants, such as GitHub Copilot, were not yet encouraging autonomous behavior, even as they introduced a YOLO mode as an “experimental switch”. Autonomy was still met with skepticism, and early YOLO mode experiments often produced unstable or buggy code.

The 2026 Shift: From Autocomplete to Agents

By early 2026, the entire industry had moved toward more autonomous workflows. Analysts and developer communities noted that the real shift was not better autocomplete but repo‑aware agents capable of multi‑file reasoning, refactoring, and long‑horizon tasks.

Cursor leaned into this trend early, but others soon followed.

GitHub Copilot’s 2026 Evolution

In February 2026, GitHub released major updates to Copilot in VS Code (v1.110), expanding its agent‑like capabilities. While not branded as “YOLO mode,” the new global auto-approval feature lets agents run within set boundaries inside a terminal sandbox, and it is toggled with /autoApprove or /yolo directly in the chat input.

The new features aligned with the same philosophy:

- deeper workspace awareness

- multi‑step edits

- more autonomous refactoring

- agent‑driven workflows integrated directly into the IDE

These updates marked GitHub’s move toward the same autonomy frontier Cursor had been exploring.

Why YOLO Mode Matters

YOLO mode represents a cultural and technical shift:

- From: autocomplete and suggestions

- To: agents that can plan, modify, and execute complex changes

- From: human‑in‑the‑loop at every step

- To: human‑on‑the‑loop oversight with selective intervention

Developers increasingly trust agents to take initiative, and tools are evolving to match that trust.
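The difference between the two oversight models can be made concrete with a small sketch. The Python below is illustrative only: the `Action` type, the `LOW_RISK_KINDS` set, and both policy functions are hypothetical names invented for this example, and they do not reflect how Cursor, Claude Code, or GitHub Copilot implement approvals. The choice of which action kinds count as low risk is likewise an assumption.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Iterable


class Decision(Enum):
    AUTO_APPROVED = "auto_approved"
    HUMAN_APPROVED = "human_approved"
    REJECTED = "rejected"


@dataclass(frozen=True)
class Action:
    """A single step an agent wants to take (hypothetical model)."""
    kind: str    # e.g. "read_file", "edit_file", "run_command"
    target: str  # file path or command line


# Assumption for illustration: these kinds are treated as low risk.
LOW_RISK_KINDS = {"read_file", "edit_file"}

AskHuman = Callable[[Action], bool]


def human_in_the_loop(action: Action, ask_human: AskHuman) -> Decision:
    """Every action waits for an explicit human decision."""
    return Decision.HUMAN_APPROVED if ask_human(action) else Decision.REJECTED


def human_on_the_loop(action: Action, ask_human: AskHuman) -> Decision:
    """Low-risk actions proceed; the human is consulted only for the rest."""
    if action.kind in LOW_RISK_KINDS:
        return Decision.AUTO_APPROVED
    return Decision.HUMAN_APPROVED if ask_human(action) else Decision.REJECTED


def count_prompts(policy, actions: Iterable[Action]) -> int:
    """Count how often a policy interrupts the human for a list of actions."""
    prompts = 0

    def ask(action: Action) -> bool:
        nonlocal prompts
        prompts += 1
        return True  # the simulated human always approves

    for action in actions:
        policy(action, ask)
    return prompts


if __name__ == "__main__":
    plan = [
        Action("read_file", "src/app.py"),
        Action("edit_file", "src/app.py"),
        Action("run_command", "pytest"),
    ]
    print("in-the-loop prompts:", count_prompts(human_in_the_loop, plan))
    print("on-the-loop prompts:", count_prompts(human_on_the_loop, plan))
```

With this three-step plan, the in-the-loop policy interrupts the human at every step, while the on-the-loop policy asks only about the command execution. The trade-off is the one described above: fewer interruptions, at the cost of relying on the risk classification being right.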

Warning: What is the Impact on Software Quality?

A 2026 study by Carnegie Mellon University analyzed 518 GitHub repositories (matched to 679 controls). It found that autonomous agents offer meaningful velocity gains only in repositories that had not previously used any AI, while consistently raising complexity and warning levels across contexts. This indicates sustained agent-induced technical debt even as the velocity advantages fade. The study did not, however, investigate human-on-the-loop scenarios using AI IDEs, or how varying levels of software development expertise among the humans interacting with agents affect the outcome.

Why This Matters for Data Sharing, Data Quality Control, and Data Access

As we summarized in our previous blog post on problematic EHR data, there are troves of valuable datasets for biomedical research that contain sensitive data, are managed in restricted-access mode, and require intelligent processing to be curated, cleaned and analyzed.

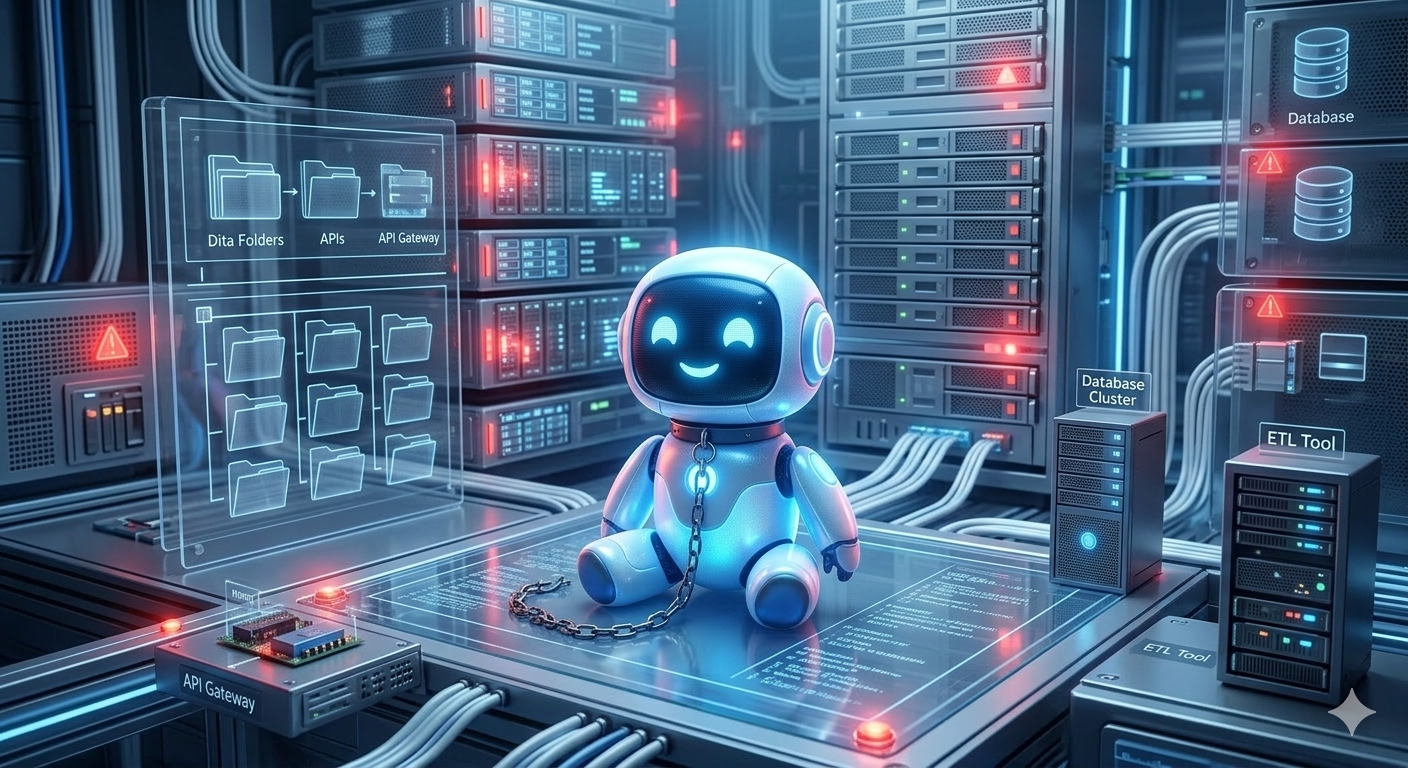

As the tools used by human software developers become more autonomous, and simultaneously more accessible to non‑programmers tasked with building either data‑access or data‑analysis tools, we need guardrails at two levels:

• The agent technology itself

• The human‑in‑the‑loop who supervises and approves its actions

These guardrails were discussed at the Research Data Alliance 26th Plenary Meeting (VP26), session “Agents at the Gate”: